I was so surprised when I read that Microsoft developers implemented lazy commit in SQL Server 2014. Why I was surprised? Because it is totally against one of the main four relational database management systems principles (ACID) – durability. Durability in short words means that if data was changed, it was changed and you can rely on that fact. In practice, if the database engine returns the success code after the commit operation, the whole transaction is considered done and restarting the server a millisecond later cannot change that.

I was so surprised when I read that Microsoft developers implemented lazy commit in SQL Server 2014. Why I was surprised? Because it is totally against one of the main four relational database management systems principles (ACID) – durability. Durability in short words means that if data was changed, it was changed and you can rely on that fact. In practice, if the database engine returns the success code after the commit operation, the whole transaction is considered done and restarting the server a millisecond later cannot change that.

And now there is the delayed durability feature available in SQL Server 2014 … besides it breaks one of the ACID properties, it can do a lot of good.

Concept

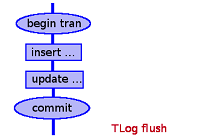

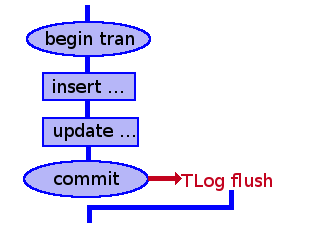

The delayed transaction durability feature changes the time when the transaction log records are saved to disk. Normally, it is done when a transaction is committed if IMPLICIT_TRANSACTIONS option is off or after each DML operation if IMPLICIT_TRANSACTIONS is on. Nevertheless, the log entries go to disk when the operation is considered committed.

The below diagram shows when TLog flush operation takes place and you can infer from it that next operation can be executed when TLog flush is finished.

When the delayed durability is on, it may happen later, not necessarily on the commit. In result, there is less work required to be done before returning the success code after SQL statement – saving transaction log entries can be done later.

Hence, enabling the delayed durability feature may improve performance of updates and inserts.

Risks

Obviously, as the delayed transaction durability feature breaks durability of transactions result, it should be used very carefully and only after deep analysis of the case and accepting a potential data loss. Such scenario may happen for example when the server is restarted before flushing transaction log entries to disk. It is a serious case and it excludes usage of the feature in most of the systems.

For example, risking losing some data changes is unacceptable in probably all financial systems. Due to that fact, there is no point to even think about using this feature in such case.

Potential area of usage

As I stated above, the delayed durability can cause data loss in some scenarios. Fortunately, there is a small group of systems that can live with it. I can image an internet service for rating movies. After watching a movie, one can give a score to the movie. Rating is presented on a web page. In case of that system, losing a few seconds of points given is not a big deal. Probably nobody will notice the missing data including the person who rated the movie.

I hope you agree, there are some cases in which the feature can be used. I guess it may noticeably improve performance of DML operations. If time permits, I will try to make a performance test in one of the next articles.

Using delayed durability

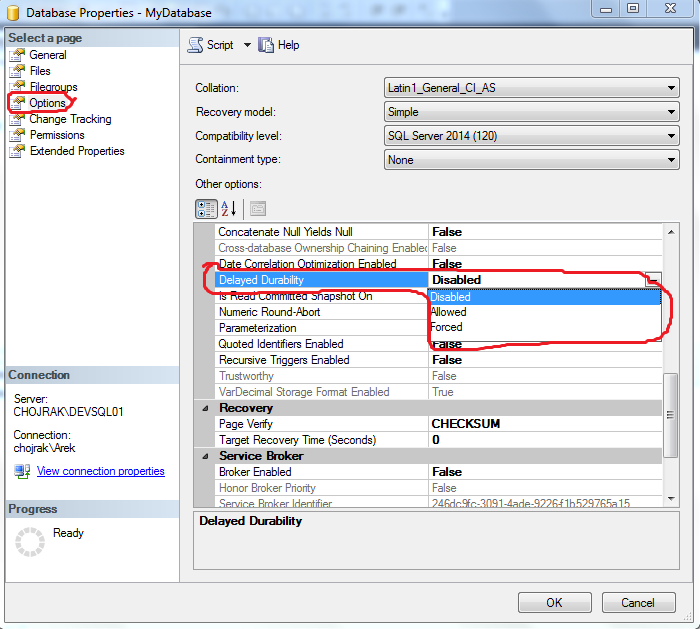

To take full advantage of the feature, I need to allow using it on the database level. To do that, I can perform the right mouse button click on the database for which I want to enable it, then go to Options tab and switch Delayed Durability to Allowed or Forced.

Disabled option disables the feature no matter what is set anywhere else.

Allowed options defers decision about using the feature to commit level and atomic block level.

Forced option forces using the feature.

Another way to enable it is to use ALTER DATABASE command:

ALTER DATABASE MyDatabase SET DELAYED_DURABILITY = ALLOWED;

Once the feature is allowed to use on the database level, I can execute transactions with delayed durability. The script below demonstrates that:

BEGIN TRAN;

INSERT INTO test (id, name) VALUES (1, 'simple test');

INSERT INTO test (id, name) VALUES (2, 'second row');

COMMIT WITH (DELAYED_DURABILITY = ON);

Commit option WITH (DELAYED_DURABILITY = ON) tells SQL Server engine to use the delayed durability. Obviously, there is no noticeable difference for the script executor between DELAYED_DURABILITY = ON and DELAYED_DURABILITY = OFF. The difference is hidden deep inside the engine. Simply saying, there is no gurantee that the commit statement makes the change durable but to compensate that the script might execute a little bit faster than without the delayed durability.

Last words

I know, the effect of the feature does not seem to be spectacular at this moment but this is what should have been expected – it is a performance improvement feature not a business feature for developers.

I have also executed a performance test of the Delayed Durability feature.

This article does not exhaust the subject. For more information, risks, advices, constraints please refer to MSDN documentation.