In DBAs world there are some tasks that are usually done as quickly as possible without giving them enough attention. Database schema modeling is widely understood as important and even if not everyone puts enough effort to it (mostly because of time restrictions), it is agreed it should be done well. I am sure development DBAs know that. I hope most of development team leads and developers agree too. If a schema design is screwed up at the beginning, at some point in time developing new features will become expensive and difficult or the system will start experiencing performance issues. Then it will be very difficult to straighten that out - make a new schema design, update or even rewrite data layer in the application. It is known and understood.

In DBAs world there are some tasks that are usually done as quickly as possible without giving them enough attention. Database schema modeling is widely understood as important and even if not everyone puts enough effort to it (mostly because of time restrictions), it is agreed it should be done well. I am sure development DBAs know that. I hope most of development team leads and developers agree too. If a schema design is screwed up at the beginning, at some point in time developing new features will become expensive and difficult or the system will start experiencing performance issues. Then it will be very difficult to straighten that out - make a new schema design, update or even rewrite data layer in the application. It is known and understood.

One of the tasks belonging to schema modeling is choosing correct data types for tables' columns. And this one is widely underestimated at the beginning of designing and development but it also may have big consequences later on. I would like to focus on two aspects: choosing a datatype and a size.

Why it is important

Oh, there are a few reasons but two are the most important. The first one is a risk of high severity issue on production. Imagine a social security system which does not allow registering a citizen with a last name longer than 30 characters. Thirty characters seems to be enough. And probably is … in most cases. But there are women with a very long last name that have married a man with a long last name too. Some of them decided to keep both names. It is not so difficult to exceed those 30 characters in such cases.

Another example is a financial system. If transactionValue column is 3 digits long, but some of transactions in the system exceed $999, the database is unable to store them and the application rejects them. It causes a real financial loss.

What would your boss say in such case? It could sound like "I need a fix. NOOOOW!".

And here we have come to the second reason - a difficulty of fixing it. Making lastName column wider from 30 characters to 50 or 100 is not very hard but extending transactionValue might be. In a financial system, money amounts are stored in multiple places so it will not be a single column operation, but many more like taxValue, feeValue and probably some aggregations too. The whole task gets more complex when a column needed to be extended is a primary key referenced by foreign keys in multiple tables. Then, all the FKs need to be dropped, columns in all child tables extended, FKs recreated. What if a traffic coming to the system cannot be stopped and referential integrity needs to be kept with FKs temporarily dropped? Brrrr… I don’t wish this to happen to anybody.

Those two reasons make me take "choose datatypes" task seriously.

General advices

In the first place, I try to avoid future modifications in a data model. I want it to be flexible enough to store all reasonable data that it was purposed for. It means, I prefer to choose more flexible datatype if there is a high-enough risk the flexibility might be needed.

The same applies to a column’s length. If I suppose 100 characters should be enough, I give 200 just to be safer.

Other side of the topic is a cost of flexibility. There is a thin line between flexibility and overdesign. It is easy to cross that line with a data model. Choosing varchar/varchar2(100) to store a number of one woman’s children is definitely an overdesign. Disk space is another factor that needs to be taken under consideration. Ten digits number consumes more disk space when stored as a text than a number. Also seeking and scanning an index built on a numeric column is much more efficient.

As usual, making the decision is choosing an option with advantages and disadvantages. Important is to know them and choose wisely.

Below is a list of my preferences for storing different types of information.

Names and addresses

Datatype – nvarchar/nvarchar2 seem to be the right choice. Even if the system is not going to support multiple languages, it still might need to occasionally create an account for a person that has a foreign name or address.

Length – to determine it, I image a very long value for the field and make the field 5 times wider. For example, the longest first name I know has 13 characters (Hermenegilda), but someone may want to type also a middle name in the field which is common and it also might be very long plus a space between. It gives 13+1+13=27 characters. As I mentioned earlier, I make it 5 times wider just to be sure the names will fit - I don’t know all long names in the world but for sure there are longer than 13 characters. 5 * 27 = 135. I would make it 140.

Writing this article, I decided to find the longest name in the world. Full name is 226 long, first plus middle names – 218 characters. Although, I think being prepared for serving a holder of the longest name in the world is not critical, it does not make much difference to use 250 as the length for a first name.

Fixed format identifiers

Data like postal codes, phone numbers, tax ids, social security numbers, bank account numbers, car registration identifier are well defined fixed format values. Yeah … they seem to be. But they are not.

Datatype – Although phone numbers are NUMBERs, people tends to type it with a plus, parenthesis and spaces e.g. +1 (12) 345 67 89. If for some reasons you need them to be well formed in your system, you can split the whole number to parts like: country code, extension but I usually store them as varchar/varchar2. The same rules apply to all these identifiers. Bank account number is also a number but international format (IBAN) adds two-letter country code at the beginning.

Even if currently a social security number in your country is a number like in mine, there is no guarantee it will still be the same in 2-5 years. Authorities may decide to add a letter region code or just allow letters to extend the range of options.

Length – As these ids are "fixed format" data, there is no need to be so flexible as with first/last names and multiple size by 5. If you know that a bank account number in IBAN format is 34 characters, 40-50 characters length seems reasonable as the length of the column. Of course, it may not apply if it is a bank system and you want to enforce correctness on the database schema level. Nevertheless, having a wider column in the database, does not prevent validating data in the application!

Surrogate keys

They are internal identifiers used to create primary keys. Because of their origination and purpose there are no many business requirements for them. It does not change a fact, making a mistake choosing datatype and length may have a very serious consequences.

Datatype – number. Always a number. Primary keys are created on those columns as well as foreign keys and indexes. Index seeking and scanning on them should be as efficient as possible. That is why a number is my choice.

Length – Long enough to make you sleep well at night not thinking when the system will exhaust the numbers’ pool. Those columns are probably the most difficult columns to extend in a database. It is because of PKs and FKs. While I can accept extending any other database column, I would not like to touch any of these after creation. Dropping FKs, PK, indexes, extending the column, its referencing columns in other tables, recreating PK, FKs are not anything I would like to do. Especially, if the database has to still handle incoming traffic. If I think a particular table will not have more than X inserts for its whole life (30 years?), than I choose at least 1000 * X as a length of the column.

Ids for dictionary tables

Datatype – numeric. These values exist in many tables, have foreign keys and indexes on them. To save disk space and increase performance I do not use varchars or chars.

Length - Dictionaries are opposite kind of tables than tables with transactions, users etc. A cardinality is much more predictable. A number of countries is not constant but it will not double easily. Even if amount of rows is more prone to change (dictionary with color names, account statuses), it is not difficult to determine an order of magnitude.

There are about 190 countries in the world so number(3) should be enough for countries. It is not a rule for all dictionaries. If now I have 3 account statuses defined, it is safer to use number(2) instead of number(1) as it more depends on system functionalities than real objects. That is why they is more chance of adding more.

Money amounts

Transaction values, tax values, balances, yearly revenues are various types of money amounts. They differ on a maximum reasonable value.

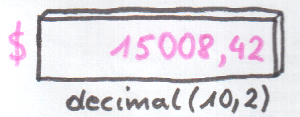

Datatype – Definitely not a floating-point number. During money calculations, a developer should always have a control on rounding. It is money! That is why fixed precision and scale numbers are the only valid choice. SQL Server has money and smallmoney datatypes. Acceptable is also decimal(p, s). In Oracle number(p, s) seems to be the right choice.

Length – There are two sides of this subject: a number of decimal digits and a scale (a number of digits to the right side of the decimal point). If a field needs to store a price of a single cinema ticket, I can assume, 3 decimal digits should be more than enough, but … if the system may need to support different currencies, 3 digits is not a good choice. There are currencies like Vietnamese Dongs (VND) which have a very low conversion rate to most of other currencies. For example 10 USD = 200 000+ VND. Keeping that in mind, SQL Server money datatype makes sense even for low price items.

Money amounts are often aggregated and stored separately like monthly/yearly sums, total purchases’ values for a region. For those columns, you need to be more careful. Estimating possible values there based on a predicted number of transactions, a maximum reasonable transaction value are factors that help to get a final size of the column. Just multiplying those two values should be enough.

Going back to the cinema ticket example, for a single transaction I would use 7 decimal digits which is 9 999 999 maximum value (because of currencies like VND). For aggregations like single cinema monthly/yearly/total revenue:

10^7 (max ticket price) * 1000 (tickets sold daily) * 365 (days in a year) * 30 (years of the system’s life) = 10^14.

It fits in SQL Server’s money datatype which is able to store up to about 10^15. In this case I do not feel a need to use anything wider. Safety margin is actually already included in all factors used in calculation. 10^7 as a cinema ticket price is a very safe assumption for most of the countries. The same about 30 years of using the system.

When it comes to a scale, majority depends on the currency. Here I trust Microsoft, money datatype uses 4 digits to the right side of the decimal point. I have never encountered a problem caused by this even working with many currencies.

Summary

For sure it does not exhaust the topic. Common sense and foresight are good partners in choosing datatypes. I hope I succeeded in presenting differences between various types of information needed to be stored in a database. Some of them require more flexibility like names, some of them do not like bank account numbers. While there is no single the best answer of which datatype should be used in a particular case, there are some good and bad choices. It is often worth to spend some time to not pick from the bad ones.